Graphics Processing Units (GPUs) have become central to artificial intelligence. This article explains why GPUs matter for AI computation and why researchers, engineers, and developers rely on them. Because GPUs can run many calculations in parallel, AI systems can perform complex calculations and process massive amounts of data efficiently, which has enabled advances in many fields. The sections below cover how GPUs work, where they help AI workloads, where they fall short, and what the alternatives are.

Understanding AI Computation

Concept of AI

Artificial Intelligence (AI) refers to the development of computer systems that can perform tasks that typically require human intelligence, such as visual perception, speech recognition, decision-making, and problem-solving. AI computation involves complex calculations and algorithms that enable machines to learn from data, reason, and make informed decisions.

History and Evolution of AI Computation

The concept of AI dates back to the 1950s when scientists started exploring the possibility of creating machines capable of mimicking human intelligence. Over the decades, AI computation has evolved significantly with advancements in computer hardware, algorithms, and data availability. From rule-based systems to machine learning algorithms and deep neural networks, AI has made significant strides, enabling more sophisticated applications in various fields.

Current State of AI Computation

AI computation has reached new heights in recent years, thanks to advancements in technology. With the availability of vast amounts of data and the ever-increasing computational power, AI algorithms can process enormous datasets, extract patterns, and make predictions at unprecedented speeds. This has led to the emergence of real-world applications, such as autonomous vehicles, natural language processing, image recognition, and recommendation systems.

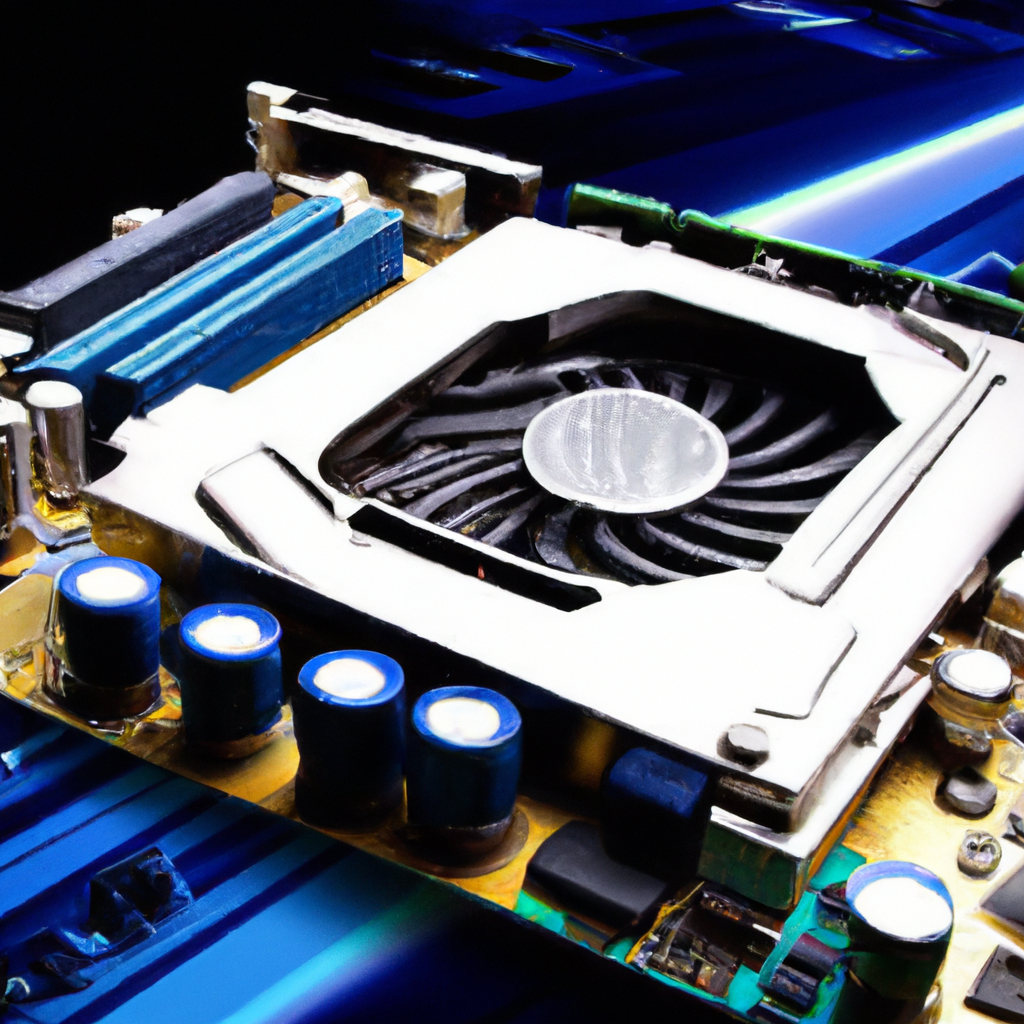

Introduction to GPU

What is a GPU?

A Graphics Processing Unit (GPU) is a specialized electronic circuit designed to handle and accelerate the rendering of visuals in computer graphics. Originally developed for graphics-intensive tasks in gaming and entertainment, GPUs have evolved to play a crucial role in AI computations. Unlike Central Processing Units (CPUs) that excel in sequential tasks, GPUs are highly parallel processing units capable of performing multiple calculations simultaneously, making them ideal for complex computational tasks.

History of GPUs

The history of GPUs can be traced back to the 1980s, when graphics cards were first used to accelerate computer graphics rendering. As graphics workloads grew more demanding, companies such as NVIDIA and ATI (acquired by AMD in 2006) developed specialized GPUs to meet the demand. These GPUs later became programmable, which allowed them to be used for non-graphical tasks, including scientific simulations, data analysis, and eventually AI computations.

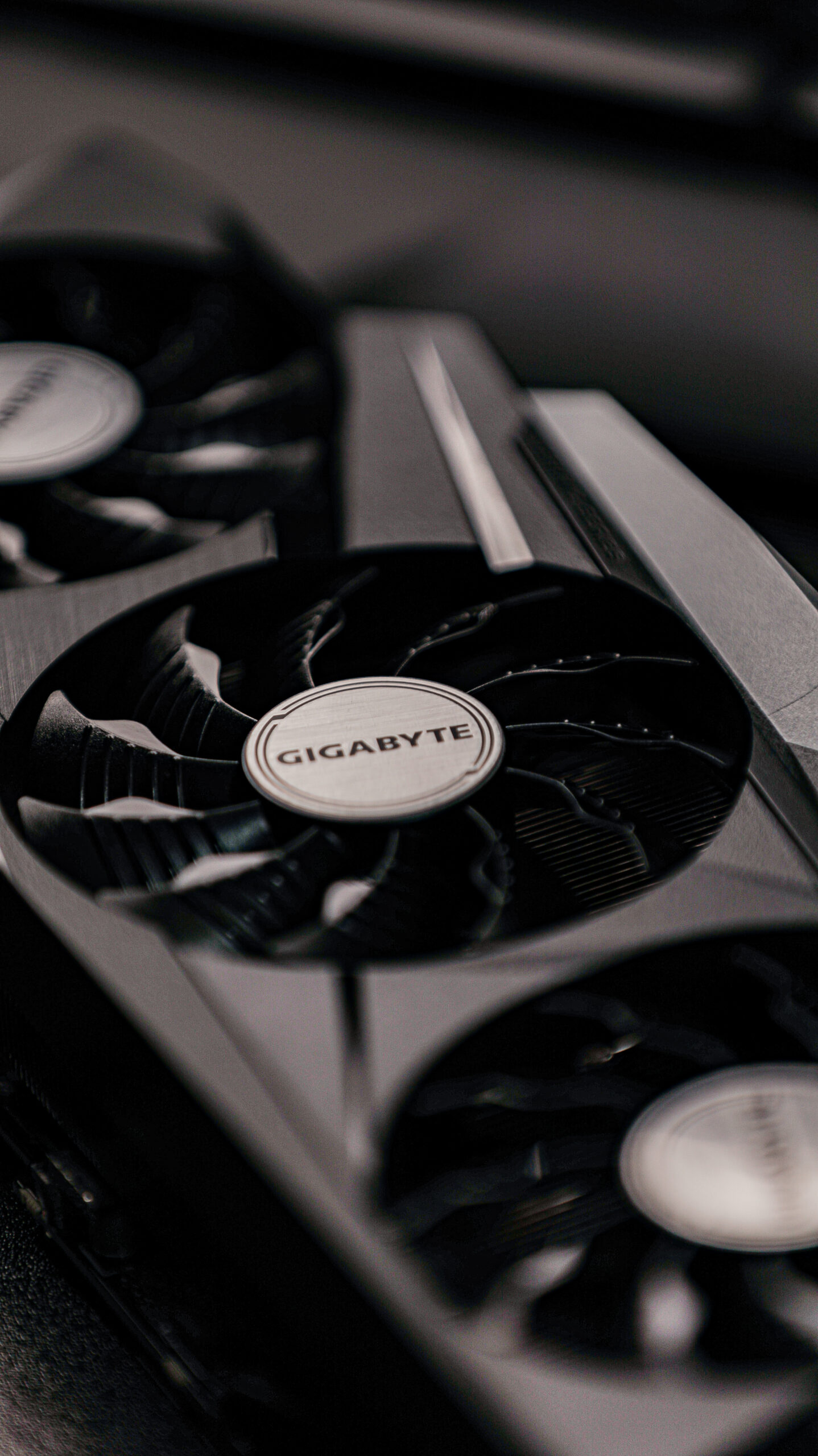

Difference Between GPU and CPU

While both GPUs and CPUs are essential hardware components of modern computers, they have distinct characteristics and functionalities. CPUs are designed for general-purpose computing, handling a wide range of tasks. They excel in executing single-threaded tasks that require sequential processing. In contrast, GPUs are tailored for parallel processing, with thousands of cores capable of running multiple calculations simultaneously. This makes GPUs highly efficient for AI computations, where massive parallelism is often required.
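
The difference between the two kinds of work can be sketched in a few lines of Python. This is only an illustration of workload structure, not of real GPU execution: the function names and the thread pool are assumptions made for the example, and Python threads will not give a GPU-like speedup.

```python
from concurrent.futures import ThreadPoolExecutor


def scale(x: float) -> float:
    """Independent per-element work: no element needs another element's result."""
    return 2.0 * x + 1.0


def parallel_map(values, workers=4):
    # Every element can be handed to any worker in any order.
    # This is the shape of work that suits a GPU.
    with ThreadPoolExecutor(max_workers=workers) as pool:
        return list(pool.map(scale, values))


def running_recurrence(values, start=0.0):
    # Each step depends on the previous step's result, so the loop
    # cannot be split across workers. This is the shape of work that suits a CPU.
    state = start
    out = []
    for v in values:
        state = 0.5 * state + v
        out.append(state)
    return out


data = [1.0, 2.0, 3.0, 4.0]
parallel_result = parallel_map(data)
sequential_result = running_recurrence(data)
print(parallel_result)
print(sequential_result)
```

The first function could be spread over thousands of cores because its elements never interact; the second gains nothing from extra cores because each step waits on the one before it.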

The Role of GPUs in AI Computation

GPU in AI Workloads

GPUs play a pivotal role in accelerating AI workloads. AI computations involve heavy matrix operations, such as matrix multiplications and convolutions, which can be parallelized and efficiently executed on GPUs. By distributing these calculations across a multitude of cores, GPUs significantly speed up the processing time of AI algorithms, enabling faster and more efficient training of models.
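
To see why matrix multiplication parallelizes so well, consider the minimal pure-Python sketch below. It assumes small list-of-lists matrices and uses hypothetical helper names; real AI frameworks use optimized GPU libraries rather than loops like these. The point is that every output cell is an independent dot product, so the cells can be computed in any order, or all at once.

```python
def dot_cell(a, b, i, j):
    """Compute one output cell: row i of `a` times column j of `b`."""
    return sum(a[i][k] * b[k][j] for k in range(len(b)))


def matmul(a, b):
    """Multiply two matrices by computing each cell in row-major order."""
    rows, cols = len(a), len(b[0])
    return [[dot_cell(a, b, i, j) for j in range(cols)] for i in range(rows)]


def matmul_any_order(a, b):
    """Same product, but cells are filled in reverse order to show independence."""
    rows, cols = len(a), len(b[0])
    result = [[0] * cols for _ in range(rows)]
    cells = [(i, j) for i in range(rows) for j in range(cols)]
    for i, j in reversed(cells):
        result[i][j] = dot_cell(a, b, i, j)
    return result


a = [[1, 2], [3, 4]]
b = [[5, 6], [7, 8]]
c = matmul(a, b)
print(c)
print(matmul_any_order(a, b) == c)
```

Because no cell depends on another, a GPU can assign each cell (or a tile of cells) to its own group of threads.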

Speed and Efficiency of GPU in AI

The parallel processing capabilities of GPUs give them a large speed advantage over CPUs for most AI computations. With thousands of cores operating concurrently, GPUs can perform computations on large datasets in parallel, offering substantial speedups compared to sequential execution on CPUs. Although a GPU’s absolute power draw is high, it often completes parallel workloads using less energy per calculation than a CPU, which can reduce both the time and the cost of AI computations.
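
How large the speedup is depends on how much of a workload can actually run in parallel. A common way to reason about this is Amdahl's law, which bounds the ideal speedup by the fraction of work that stays sequential. The sketch below is a small calculator for that formula; the function name and the example fractions are illustrative assumptions, not measurements of any real GPU.

```python
def amdahl_speedup(parallel_fraction: float, workers: int) -> float:
    """Ideal speedup when `parallel_fraction` of the work is spread over `workers`."""
    if not 0.0 <= parallel_fraction <= 1.0:
        raise ValueError("parallel_fraction must be between 0 and 1")
    if workers < 1:
        raise ValueError("workers must be at least 1")
    serial_fraction = 1.0 - parallel_fraction
    # The serial part takes the same time; the parallel part is divided among workers.
    return 1.0 / (serial_fraction + parallel_fraction / workers)


# Hypothetical workloads: these fractions are made up for illustration.
for fraction in (0.50, 0.95, 0.99):
    speedup = amdahl_speedup(fraction, 1000)
    print(f"{fraction:.0%} parallel on 1000 workers: {speedup:.1f}x")
```

Under this model, a workload that is half sequential can never run more than twice as fast, no matter how many cores are available. AI workloads benefit from GPUs largely because so much of their work is parallel.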

Higher Processing Capabilities of GPU

The sheer processing power of GPUs enables them to handle complex AI computations more effectively than CPUs. Deep learning algorithms, which are widely used in AI, involve intricate calculations that require massive matrix operations and multiple layers of neural networks. By leveraging the parallelism of GPUs, these computations can be processed more quickly, allowing researchers and developers to train deep learning models with larger datasets and more complex network architectures.

Why are GPUs Important for AI?

Addressing Complex Computations

AI computations involve intricate calculations that require massive parallel processing capabilities. GPUs, with their thousands of cores, are particularly well-suited to handle the complexity and scale of these computations. Without GPUs, the computational resources required for AI tasks would be significantly limited, hindering progress in fields like computer vision, natural language processing, and autonomous systems.

Driving Innovation and Research

GPUs have played a transformative role in advancing AI research and fostering innovation. The availability of powerful GPUs has empowered researchers and developers to experiment with complex AI algorithms and explore new possibilities. By reducing computational bottlenecks and speeding up model training, GPUs have propelled breakthroughs in AI, paving the way for advancements in fields such as healthcare, robotics, finance, and more.

Enhanced Capability to Handle Data

AI relies heavily on data processing, and GPUs excel at handling large datasets. As data volumes grow, traditional CPUs often struggle to analyze and process information quickly enough. GPUs, on the other hand, can process vast amounts of data in parallel, enabling AI systems to extract meaningful insights and make predictions in real time. This enhanced capability to handle data is crucial for AI applications that require timely decision-making.

GPUs and Deep Learning

Understanding Deep Learning

Deep Learning is a subfield of machine learning, and therefore of AI, that focuses on training artificial neural networks to learn from large datasets and make predictions or decisions. It involves multiple layers of interconnected artificial neurons, a design loosely inspired by the structure of the human brain. Deep Learning algorithms excel in tasks such as image and speech recognition, natural language processing, and autonomous driving.

Role of GPUs in Deep Learning Algorithms

The effectiveness of deep learning algorithms heavily relies on the availability of computational resources for model training. GPUs, with their parallel processing capabilities, significantly accelerate the training process by distributing computations across numerous cores. This allows researchers and practitioners to train deep neural networks faster and experiment with larger and more complex models, leading to improved accuracy and performance.

Performance of GPUs in Neural Networks

Neural networks, the backbone of deep learning models, consist of interconnected layers of neurons that process and propagate information. GPUs’ parallel architecture allows for highly efficient execution of the matrix operations involved in neural network computations. This results in faster training and inference times, enabling real-time application of deep learning models in various domains, including computer vision, natural language understanding, and speech synthesis.
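
The matrix operations in question are simple to write down. The sketch below runs the forward pass of a tiny two-layer network in pure Python; the weights, layer sizes, and function names are invented for illustration, and a real framework would run the same arithmetic as batched matrix multiplications on the GPU.

```python
def dense(weights, bias, inputs):
    """One fully connected layer: a matrix-vector product plus a bias."""
    return [
        sum(w * x for w, x in zip(row, inputs)) + b
        for row, b in zip(weights, bias)
    ]


def relu(values):
    """Element-wise activation: negative values become zero."""
    return [max(0.0, v) for v in values]


# Hypothetical weights for a 2-input, 2-hidden-unit, 1-output network.
W1 = [[1.0, -1.0], [0.5, 0.5]]
B1 = [0.0, -1.0]
W2 = [[2.0, -4.0]]
B2 = [0.5]


def forward(inputs):
    hidden = relu(dense(W1, B1, inputs))
    return dense(W2, B2, hidden)


output = forward([2.0, 1.0])
print(output)
```

Each output of `dense` is an independent dot product, and `relu` treats every element separately, so both steps map naturally onto many GPU cores.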

GPUs and Machine Learning

Understanding Machine Learning

Machine Learning is a subset of AI that focuses on algorithms and systems that can learn from data, identify patterns, and make predictions or decisions without being explicitly programmed. It encompasses various techniques, including supervised learning, unsupervised learning, and reinforcement learning, and finds applications across industries.

How GPUs Accelerate Machine Learning

GPUs have a significant impact on accelerating machine learning algorithms. The parallel processing capabilities of GPUs enable efficient execution of matrix operations and other computationally intensive tasks involved in training and inference. By leveraging the massive parallelism, GPUs speed up the iterative optimization processes in machine learning, allowing researchers and practitioners to train models faster and explore more complex architectures.
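
The iterative optimization mentioned above is typically some form of gradient descent. The minimal sketch below fits a one-parameter model `y = w * x` to made-up data; the data, learning rate, and function names are assumptions chosen for the example. The per-example gradient terms are independent of each other, which is the part a GPU would compute in parallel before summing.

```python
def gradient(w, xs, ys):
    """Gradient of mean squared error for the model y = w * x."""
    # Each example's contribution is independent of the others, so these
    # terms could be computed in parallel and then reduced to a single sum.
    per_example = [2.0 * (w * x - y) * x for x, y in zip(xs, ys)]
    return sum(per_example) / len(xs)


def fit(xs, ys, learning_rate=0.05, steps=100):
    """Plain gradient descent starting from w = 0."""
    w = 0.0
    for _ in range(steps):
        # The steps themselves are sequential: each uses the previous w.
        w -= learning_rate * gradient(w, xs, ys)
    return w


# Made-up data generated from y = 2 * x.
xs = [1.0, 2.0, 3.0]
ys = [2.0, 4.0, 6.0]
w = fit(xs, ys)
print(round(w, 4))
```

The loop over steps stays sequential, but the work inside each step grows with the dataset and model size, and that inner work is what GPUs accelerate.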

Use Cases of GPUs in Machine Learning

GPUs find wide-ranging applications in machine learning tasks. They are instrumental in training models for image classification, object detection, natural language processing, and recommendation systems. Furthermore, GPUs accelerate the deployment of machine learning models in real-time applications, such as fraud detection, predictive maintenance, and personalized marketing. Their speed and efficiency make them indispensable tools for machine learning practitioners.

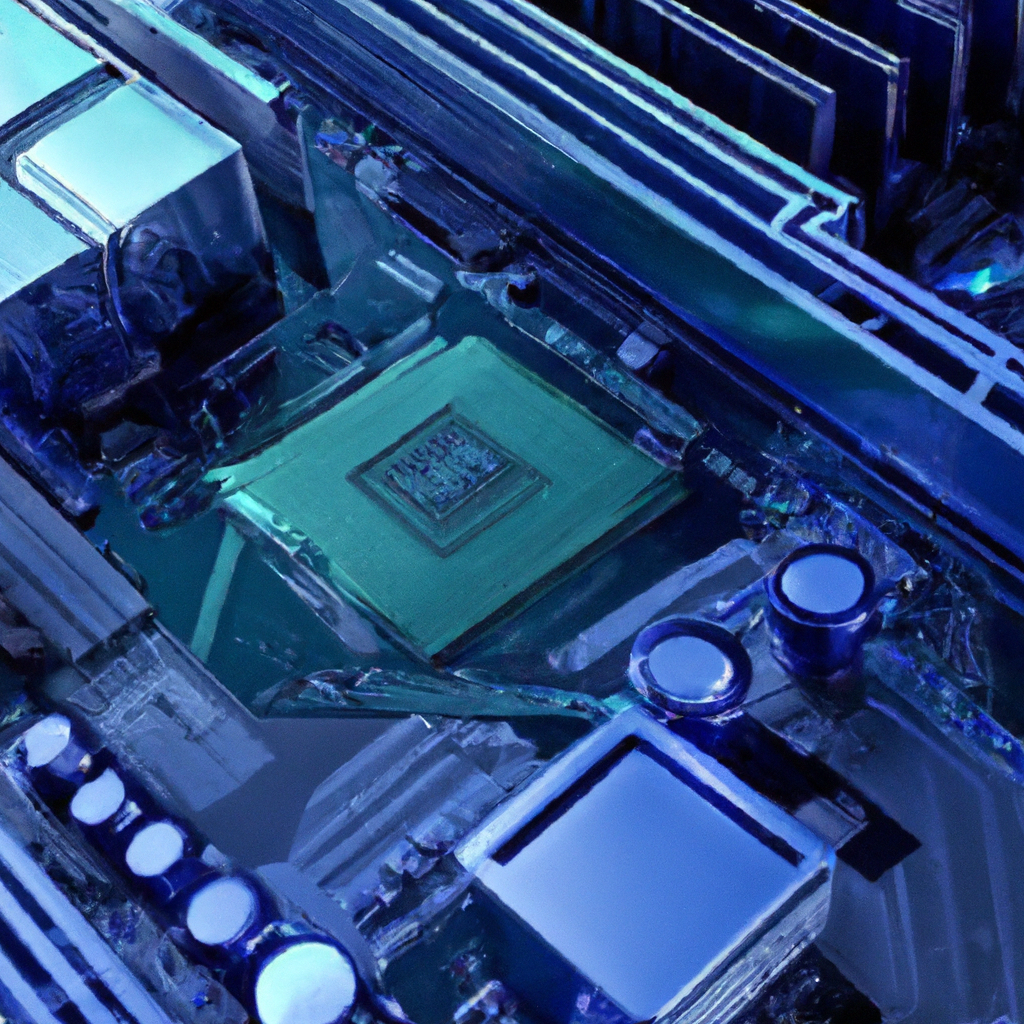

Challenges of Using GPUs in AI

Technical Limitations

Although GPUs offer significant advantages for AI computations, they are not without limitations. A GPU’s limited on-board memory, and to a lesser extent its power budget, can restrict the size of models that can be trained on a single device. Additionally, GPUs may be a poor fit for certain types of AI computations, particularly those involving irregular sparse computations or algorithms that rely heavily on sequential execution. Balancing the computational requirements with the constraints of GPU architecture is crucial for optimal performance.
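
A rough back-of-the-envelope calculation shows how quickly memory becomes the constraint. The sketch below assumes 32-bit values (4 bytes each), one gradient per parameter, and an optimizer that keeps two extra values per parameter, as Adam does. It ignores activations and framework overhead, which can be substantial, and the 24 GB device size is a hypothetical figure chosen for the example.

```python
def training_state_bytes(num_params: int, bytes_per_value: int = 4,
                         optimizer_slots: int = 2) -> int:
    """Estimate memory for weights, gradients, and optimizer state only."""
    copies = 1 + 1 + optimizer_slots  # weights + gradients + optimizer values
    return num_params * bytes_per_value * copies


def fits_in_memory(num_params: int, device_bytes: int) -> bool:
    """Check the estimate against a device's memory capacity."""
    return training_state_bytes(num_params) <= device_bytes


HYPOTHETICAL_DEVICE_BYTES = 24 * 10**9  # assumed 24 GB of GPU memory

for params in (100_000_000, 1_000_000_000, 7_000_000_000):
    needed_gb = training_state_bytes(params) / 10**9
    verdict = "fits" if fits_in_memory(params, HYPOTHETICAL_DEVICE_BYTES) else "does not fit"
    print(f"{params:,} parameters: about {needed_gb:.1f} GB, {verdict}")
```

Under these assumptions every parameter costs 16 bytes during training, so model size, numeric precision, and optimizer choice all directly affect whether a model fits on one GPU.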

High Initial Investment

GPU hardware comes with a notable upfront cost, especially when considering high-end GPUs that offer superior performance. This can pose a financial barrier for individuals or organizations looking to adopt GPUs for AI computations. However, the long-term benefits, such as faster training times, improved productivity, and the ability to tackle complex problems, often outweigh the initial investment.

Management of Power and Cooling

GPUs are power-hungry components that generate a significant amount of heat during operation. To ensure optimal performance and prevent overheating, proper power and cooling infrastructure must be in place. This can involve additional costs and infrastructure considerations, particularly in large-scale AI deployments or data centers. Efficient management of power and cooling is crucial to maintain the stability and longevity of GPUs.

Future of GPUs in AI

Anticipated Developments in GPU Technology

As AI computations continue to evolve and become more demanding, GPU technology is expected to advance further. We can anticipate developments such as increased core counts, improved power efficiency, and enhanced memory bandwidth in future GPU architectures. These advancements will provide even greater computational power and enable more complex AI computations, pushing the boundaries of what is possible in AI research and development.

Growing Integration of GPUs in AI

The integration of GPUs in AI is expected to keep growing in the coming years. As AI becomes increasingly mainstream and applications continue to expand across industries, the demand for efficient computation and accelerated training will drive the adoption of GPUs. Integrating GPUs into cloud services, edge devices, and specialized AI hardware will pave the way for pervasive AI solutions that offer scalability, accessibility, and high performance.

Potential Impact on AI Efficiency and Capability

The continued development and integration of GPUs in AI will have a profound impact on the efficiency and capability of AI systems. The ability to process and analyze large datasets quickly, train sophisticated models faster, and deploy real-time AI solutions will unlock new possibilities and drive further innovation. This will benefit industries ranging from healthcare and finance to manufacturing and transportation, revolutionizing the way we live and work.

Alternatives to GPU for AI Computations

Tensor Processing Units (TPUs)

Tensor Processing Units (TPUs) are application-specific integrated circuits (ASICs) developed by Google specifically for machine learning tasks. TPUs are built around neural network computations and, for workloads that fit their design, can offer higher speed and energy efficiency than GPUs. Because TPUs are designed specifically for machine learning, they are less suited to other types of computation.

Field Programmable Gate Arrays (FPGAs)

Field Programmable Gate Arrays (FPGAs) are programmable logic devices that allow for highly customizable hardware configurations. They can be tailored to accelerate specific AI computations. FPGAs offer flexibility, low latency, and low power consumption. However, developing and programming FPGA-based solutions can be complex and require specialized knowledge.

Application-Specific Integrated Circuits (ASICs)

Application-Specific Integrated Circuits (ASICs) are customized integrated circuits designed for specific applications. ASICs can be engineered to optimize performance and power efficiency for AI computations. Due to their specialized design, ASICs can outperform both CPUs and GPUs in speed and efficiency on the workloads they target. However, ASICs are expensive to design and manufacture, and they lack the flexibility of more general-purpose hardware.

Conclusion: The Role and Importance of GPUs in AI

Increasing Dependence on GPUs for AI

The role of GPUs in AI computations cannot be overstated. Their parallel processing capabilities, high performance, and energy efficiency make them vital for training complex deep learning models and executing machine learning algorithms. GPUs have become the go-to hardware for AI practitioners and researchers due to their ability to handle the computational demands of cutting-edge AI applications.

Importance of Continued GPU Development

Continued development and innovation in GPUs are crucial to keep up with the accelerating pace of AI technology. As AI workloads become increasingly complex and datasets continue to grow, more powerful and efficient GPUs are required to meet these demands. Further advancements in GPU architectures, memory technologies, and power efficiency will support further progress in AI and drive new breakthroughs in various domains.

Final Thoughts on Future Implications

The role of GPUs in AI will evolve hand in hand with advancements in AI algorithms, hardware technologies, and data availability. GPUs are expected to remain a cornerstone of AI computation due to their parallel processing capabilities and ability to handle large datasets. As AI continues to transform industries and our daily lives, the importance of GPUs will only deepen, shaping the future of AI research, development, and applications.